Abstract

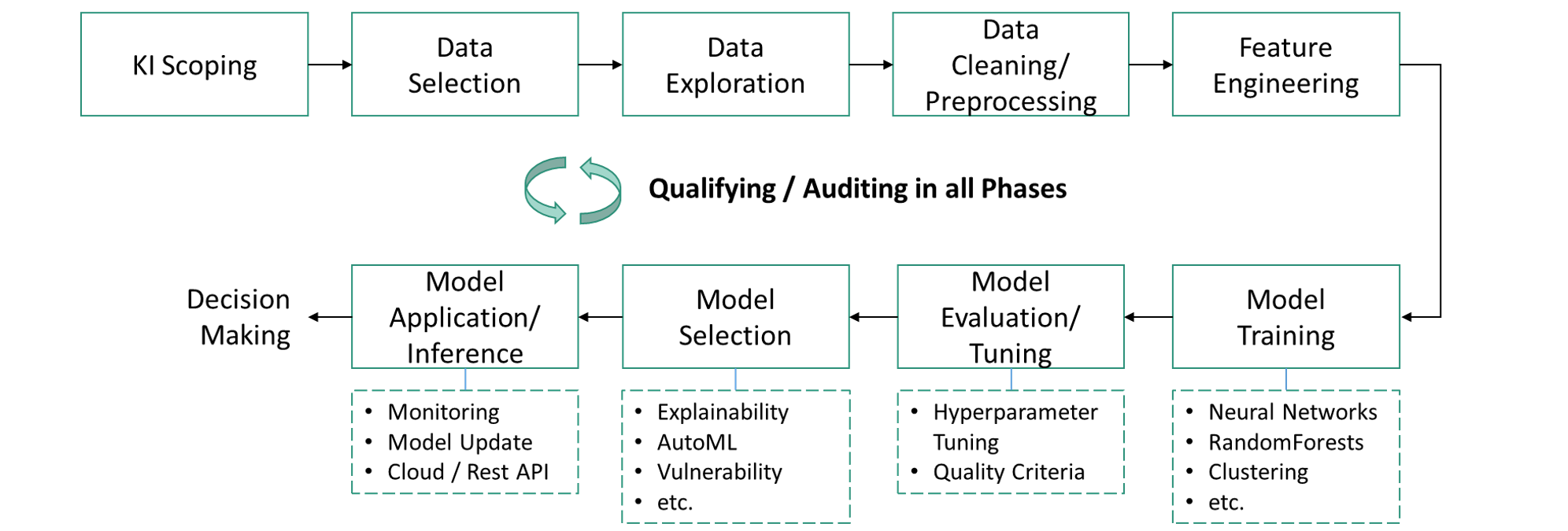

In small and medium-sized enterprises (SMEs), a large share of quality inspection in industrial production is still performed manually. By using artificial intelligence (AI), companies hope to automate quality inspection and gain economic benefits. Machine learning (ML) methods such as artificial neural networks are coming to the fore: they promise high performance and are trained entirely on data, without the need for explicit, human-made rules. However, it is precisely this self-learning property that raises concerns among companies about reliability and acceptance, and that makes ML methods more difficult to evaluate than conventional software and rule-based AI systems. In addition, SMEs often lack the personnel and expertise to test the suitability of third-party AI systems. The aim of the project is therefore to develop a software framework for the simplified qualification and auditing of ML-based AI systems in industrial quality inspection. The framework consists of a process model together with software-supported methods and tools. Because the development of an AI system passes through several phases, and decisions that affect the result are made in each of them, qualification covers all phases. To this end, the framework supports the determination and formulation of test and evaluation criteria for every phase. These criteria are recorded in a so-called assurance case (AS), which the framework uses to support the auditing of the AI system. The framework has a modular design, so that testing and auditing modules can be integrated and extended easily. It is intended to enable SMEs in particular to assess the performance of AI systems even without their own AI specialists.

Framework for Qualifying AI Systems in Industrial Quality Inspection (AIQualify)
